Architectural Constraints Nobody Talks About

by Nadzeya Stalbouskaya

Despite heavy investment in artificial intelligence, most enterprises struggle to move AI initiatives beyond the pilot stage. Industry research consistently shows that 70–80% of AI projects never reach sustained production use. While this failure is often attributed to data quality, skills shortages, or immature tooling, these explanations only describe symptoms.

This article argues that the primary limiting factor is enterprise architecture. AI pilots fail to scale because they are built on architectural foundations that were never designed for learning systems, probabilistic decision-making, or continuous change. By examining data architecture, integration patterns, platform design, and governance, this article provides a structured view of the architectural constraints that prevent AI from becoming a reliable enterprise capability.

The AI Pilot Scaling Problem

Over the last decade, organizations have accumulated substantial experience running AI pilots. Typical use cases include demand forecasting, recommendation engines, fraud detection, customer support chatbots, and predictive maintenance. In controlled environments, these pilots often demonstrate strong results.

However, multiple studies confirm that only a small fraction of pilots transition into scaled production systems.

- Harvard Business Review reports that over 80% of AI projects fail to deliver business value at scale.

- MIT Sloan Management Review highlights that most AI initiatives stall during operationalization rather than experimentation.

- McKinsey identifies “operational and architectural readiness” as a key blocker to AI value realization.

The recurring pattern suggests a systemic issue rather than isolated implementation failures.

Why AI Pilots Work and Production Does Not

AI pilots typically succeed because they operate under conditions that do not exist in production environments.

Pilots are commonly characterized by:

- Isolated execution environments

- Manually curated datasets

- Limited integration scope

- Absence of strict SLAs

- Minimal governance and compliance requirements

Production systems, by contrast, must operate within complex enterprise landscapes. They must consume live data, integrate with legacy platforms, comply with security and regulatory requirements, control costs, and remain resilient under failure.

The transition from pilot to production exposes architectural assumptions that were never validated. This article outlines five forms of architectural debt that are among the main reasons AI pilots fail to scale.

Architectural Constraint 1:

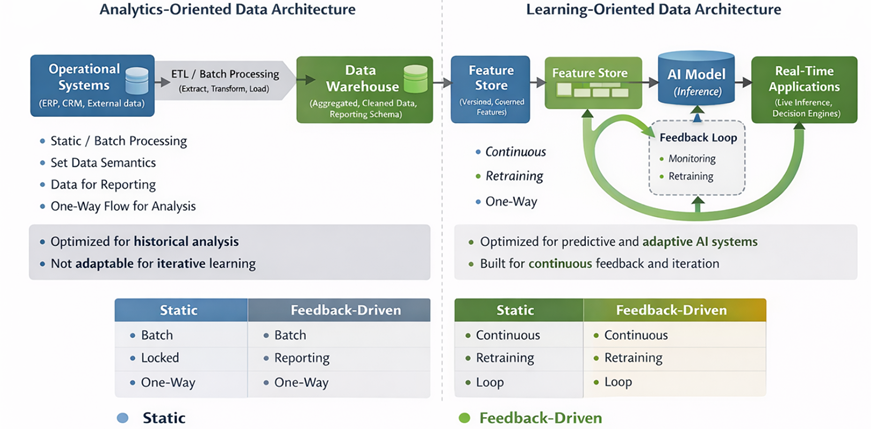

Data Architecture Designed for Analytics, Not Learning

Most enterprise data platforms were designed to support reporting and business intelligence. These platforms optimize for historical analysis, aggregation, and human interpretation.

AI systems require fundamentally different architectural properties:

- Stable data semantics over time

- Versioned datasets

- Clear ownership of data meaning

- Feedback loops from production inference back to training
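As a rough illustration of the first three properties, the sketch below registers a dataset snapshot together with a schema fingerprint, a content hash, and a named owner. The `DatasetVersion` structure, the function names, and the example columns are all hypothetical; this is a minimal sketch under those assumptions, not a description of any particular data platform.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Any, Dict, List


@dataclass(frozen=True)
class DatasetVersion:
    """Immutable description of one dataset snapshot (hypothetical structure)."""
    name: str
    owner: str               # team accountable for the meaning of the data
    schema_fingerprint: str  # hash of column names and types
    content_hash: str        # hash of the rows themselves


def fingerprint_schema(schema: Dict[str, str]) -> str:
    """Hash column names and types, independent of column order."""
    canonical = json.dumps(sorted(schema.items()))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def register_version(name: str, owner: str, schema: Dict[str, str],
                     rows: List[Dict[str, Any]]) -> DatasetVersion:
    """Create a version record for one snapshot of the dataset."""
    content = json.dumps(rows, sort_keys=True).encode("utf-8")
    return DatasetVersion(
        name=name,
        owner=owner,
        schema_fingerprint=fingerprint_schema(schema),
        content_hash=hashlib.sha256(content).hexdigest(),
    )


def schema_changed(previous: DatasetVersion, current: DatasetVersion) -> bool:
    """True when column names or types differ between two versions."""
    return previous.schema_fingerprint != current.schema_fingerprint
```

A training job could store the `DatasetVersion` it was trained on and refuse to retrain when `schema_changed` reports a difference that nobody has reviewed.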

In practice, AI teams often reuse reporting datasets or analytical views. This creates hidden risks:

- Schema changes propagate without visibility

- Business logic embedded in ETL pipelines alters data meaning

- Data drift accumulates silently

When model performance degrades, teams focus on retraining rather than addressing the underlying data architecture. As Gartner and DAMA-DMBOK both note, data architecture that lacks semantic governance cannot support scalable AI.
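One way to make silent drift visible is to compare the distribution of a feature in production against the distribution seen at training time. The sketch below uses the Population Stability Index as one possible measure; the bin edges, the `eps` floor, and the function names are illustrative assumptions, and any alerting threshold would need to be chosen per use case.

```python
import math
from typing import List, Sequence


def bin_fractions(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Fraction of values in each bin defined by ascending edges.

    Values below the first edge fall in the first bin; values at or
    above the last edge fall in the last bin.
    """
    counts = [0] * (len(edges) + 1)
    for v in values:
        idx = sum(1 for e in edges if v >= e)  # number of edges at or below v
        counts[idx] += 1
    total = len(values)
    return [c / total for c in counts]


def population_stability_index(expected: Sequence[float],
                               actual: Sequence[float],
                               edges: Sequence[float],
                               eps: float = 1e-6) -> float:
    """PSI = sum over bins of (a - e) * ln(a / e).

    Empty bins are floored at eps (an assumed value) to avoid log(0).
    """
    e_frac = bin_fractions(expected, edges)
    a_frac = bin_fractions(actual, edges)
    psi = 0.0
    for e, a in zip(e_frac, a_frac):
        e = max(e, eps)
        a = max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi
```

Identical distributions give a PSI of zero, and the value grows as production data moves away from the training baseline, which gives teams a signal to investigate the data pipeline before reaching for retraining.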

Analytics-Oriented Data Architecture vs Learning-Oriented Data Architecture

Architectural Constraint 2:

Separation of Training and Production Pipelines

A common architectural anti-pattern is the separation of training and production pipelines across teams, tools, or platforms.

Typical consequences include:

- Duplicate feature engineering logic

- Diverging transformation rules

- Different latency and freshness characteristics

- Inconsistent data quality controls

This leads to a situation where the model behaves correctly relative to its training data but unpredictably in production. While often labeled as “model drift,” the root cause is architectural inconsistency.

Both Google’s ML Engineering guidelines and the ISO/IEC 5338 draft on AI lifecycle management emphasize that training and inference pipelines must be treated as a single architectural system.

| Aspect | Training Pipeline | Production Pipeline | Risk |
| --- | --- | --- | --- |
| Data source | Historical snapshots | Live streams | Semantic drift |
| Transformations | Notebook-based | ETL-based | Logic mismatch |
| Ownership | Data science | Platform / IT | Accountability gaps |
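Treating both pipelines as one system can be as simple as giving them a single feature definition to call. The sketch below assumes a hypothetical `amount` field and two made-up features; the point is only that the batch path and the online path share the same function, so transformation rules cannot diverge.

```python
import math
from typing import Dict, Iterable, List


def build_features(raw: Dict[str, float]) -> Dict[str, float]:
    """Single source of truth for feature logic (field names are hypothetical)."""
    amount = raw["amount"]
    return {
        "log_amount": math.log1p(max(amount, 0.0)),
        "is_large_order": 1.0 if amount > 1000.0 else 0.0,
    }


def training_features(history: Iterable[Dict[str, float]]) -> List[Dict[str, float]]:
    """Batch path: applies the shared logic to historical snapshots."""
    return [build_features(row) for row in history]


def serving_features(event: Dict[str, float]) -> Dict[str, float]:
    """Online path: applies the same logic to one live event."""
    return build_features(event)
```

Because both paths delegate to `build_features`, a change to the threshold or the transformation is made once and reaches training and inference together.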

Architectural Constraint 3:

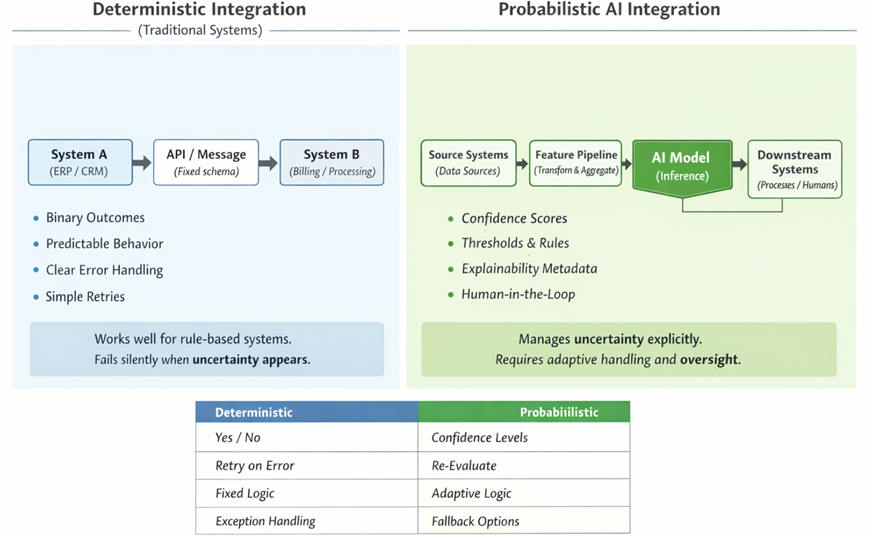

Integration Patterns Not Designed for Probabilistic Systems

Enterprise integration architectures traditionally assume deterministic behavior. Downstream systems expect clear inputs, predictable outputs, and well-defined error handling.

AI systems are probabilistic by nature. They produce confidence scores, uncertainty ranges, and non-repeatable outcomes.

This mismatch creates architectural tension:

- Downstream systems cannot interpret confidence levels

- Retry and fallback behavior is undefined

- Responsibility for decisions is unclear

Point-to-point integrations may work for pilots but scale poorly. As the NIST AI Risk Management Framework and ISO/IEC 42001 both highlight, the lack of architectural contracts for probabilistic behavior increases operational and risk exposure.
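An architectural contract for probabilistic behavior might look like the sketch below: the model output carries its confidence and version explicitly, and a routing function defines what downstream systems do at each confidence level, including when no prediction is available. The `Prediction` and `Action` types and both thresholds are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Action(Enum):
    AUTO_APPROVE = "auto_approve"      # model decision is applied directly
    HUMAN_REVIEW = "human_review"      # a person makes the final decision
    FALLBACK_RULES = "fallback_rules"  # deterministic rules take over


@dataclass(frozen=True)
class Prediction:
    label: str
    confidence: float   # expected to lie in [0, 1]
    model_version: str


def route(prediction: Optional[Prediction],
          auto_threshold: float = 0.9,
          review_threshold: float = 0.6) -> Action:
    """Map a prediction to a downstream action (thresholds are assumptions)."""
    if prediction is None:
        # Model timed out or failed: fallback behavior is defined, not implicit.
        return Action.FALLBACK_RULES
    if not 0.0 <= prediction.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if prediction.confidence >= auto_threshold:
        return Action.AUTO_APPROVE
    if prediction.confidence >= review_threshold:
        return Action.HUMAN_REVIEW
    return Action.FALLBACK_RULES
```

Making the thresholds part of the contract gives each outcome a clear owner: the model for automatic approvals, a reviewer for the middle band, and the rule engine for everything else.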

Deterministic Integration vs Probabilistic AI Integration

Architectural Constraint 4:

Platforms Built for Stability, Not Continuous Change

Traditional enterprise platforms prioritize stability and predictability. AI systems require controlled and continuous change.

Production AI systems must support:

- Model versioning and rollback

- Continuous retraining

- Performance and bias monitoring

- Cost transparency and optimization

| Capability | Pilot Assumption | Production Requirement |
| --- | --- | --- |
| Deployment | One-time | Continuous |
| Monitoring | Manual | Automated |
| Cost control | Ignored | Mandatory |
| Rollback | Not needed | Critical |

Most pilots assume a single model and static deployment. At scale, this assumption leads to operational debt. As McKinsey and ThoughtWorks note, organizations that lack platform-level AI capabilities experience rapid cost escalation and reduced reliability.
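The versioning and rollback requirement can be sketched with a very small in-memory registry. The `ModelRegistry` class and its method names are hypothetical; a production platform would persist this history and tie it to deployment, but the core idea is the same: every promotion is recorded, and the previous version is always one call away.

```python
from typing import List, Optional


class ModelRegistry:
    """Tracks promoted model versions so the active one can be rolled back."""

    def __init__(self) -> None:
        self._history: List[str] = []  # promoted versions, oldest first

    def promote(self, version: str) -> None:
        """Make a new version the active one."""
        self._history.append(version)

    @property
    def active(self) -> Optional[str]:
        """Currently active version, or None if nothing was promoted yet."""
        return self._history[-1] if self._history else None

    def rollback(self) -> str:
        """Drop the active version and return the one that replaces it."""
        if len(self._history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._history.pop()
        return self._history[-1]
```

A monitoring job that detects degraded performance could call `rollback()` automatically, which is only possible when deployment is designed as a sequence of versions rather than a one-time event.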

Architectural Constraint 5:

Governance Introduced After the Pilot

In many organizations, governance is introduced only after a pilot demonstrates value. Security, risk, and compliance teams are engaged late in the lifecycle.

By that point:

- Data lineage is incomplete

- Decisions are not reproducible

- Accountability is unclear

Both the EU AI Act and ISO/IEC 42001 emphasize that governance must be embedded into system design, not added as a post-hoc control. Late governance is one of the most common reasons pilots are stopped before scaling.
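Embedding governance into the design can start with recording, for every decision, what is needed to reproduce and attribute it. The sketch below is one hypothetical shape for such a record; the field names and identifiers are assumptions, not requirements taken from the EU AI Act or ISO/IEC 42001.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Any, Dict


@dataclass(frozen=True)
class DecisionRecord:
    """Audit entry for one model decision (hypothetical structure)."""
    decision_id: str
    model_version: str      # which model produced the output
    dataset_version: str    # which training data the model was built on
    input_hash: str         # fingerprint of the exact inputs used
    output: str
    accountable_owner: str  # team or role answerable for the decision


def record_decision(decision_id: str, model_version: str, dataset_version: str,
                    features: Dict[str, Any], output: str,
                    owner: str) -> DecisionRecord:
    """Build an audit record; inputs are hashed in a key-order-independent way."""
    payload = json.dumps(features, sort_keys=True).encode("utf-8")
    return DecisionRecord(
        decision_id=decision_id,
        model_version=model_version,
        dataset_version=dataset_version,
        input_hash=hashlib.sha256(payload).hexdigest(),
        output=output,
        accountable_owner=owner,
    )


def to_audit_line(record: DecisionRecord) -> str:
    """Serialize the record as one JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)
```

Writing such a record at inference time addresses all three gaps listed above: lineage through the dataset and model versions, reproducibility through the input hash, and accountability through the named owner.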

Architecture as the Scaling Mechanism

Scaling AI is not primarily a data science challenge. It is an architectural one.

Organizations that scale AI successfully treat it as:

- A system capability, not a feature

- A change in decision-making architecture

- A new operational and risk domain

They invest early in:

- End-to-end data lifecycle design

- Explicit ownership and accountability

- Integration contracts that handle uncertainty

- Platforms designed for continuous learning

These are architectural decisions, not tooling choices.

In Summary

AI pilots fail to scale not because the models are weak, but because the enterprise architecture beneath them is fragmented, opaque, and unprepared for learning systems.

AI exposes architectural debt in data, integration, platforms, and governance. Organizations that address these constraints early turn AI from an experiment into a sustainable capability. Those that do not will continue to accumulate pilots that never become products.

In the era of AI, enterprise architecture is no longer a supporting discipline. It is the primary scaling factor.

About the Author

Nadzeya Stalbouskaya is an award-winning Technology Architect, prolific author, and recognized international conference speaker. With numerous publications across respected global journals and magazines, she is widely regarded as one of the emerging voices shaping the future of enterprise architecture and digital transformation. Nadzeya is an active member of leading industry organizations, serving as ambassador and advisor to global communities where she promotes knowledge exchange, governance excellence, and innovative architectural thinking. She has spoken at some of the most prestigious events in Europe, inspiring thousands of professionals with practical strategies for addressing architecture debt, building resilient systems, and accelerating business transformation.

Her approach? Strategy. Architecture. Elegance of approach.